At the WWDC conference this year, Apple announced their long-awaited headset computing device, the Vision Pro. I've long been interested in augmented reality, so I couldn't resist writing up some initial thoughts.

As widely acknowledged, the Apple Vision Pro is a very impressive set of interconnected technologies that builds on Apple's years of development in advanced silicon, operating systems, displays, cameras, and more. It reminds me of this quote from Jony Ive:

"There's the object, the actual product itself, and then there's all that you learned. What you learned is as tangible as the product itself, but much more valuable, because that's your future." - Jony Ive, 2014

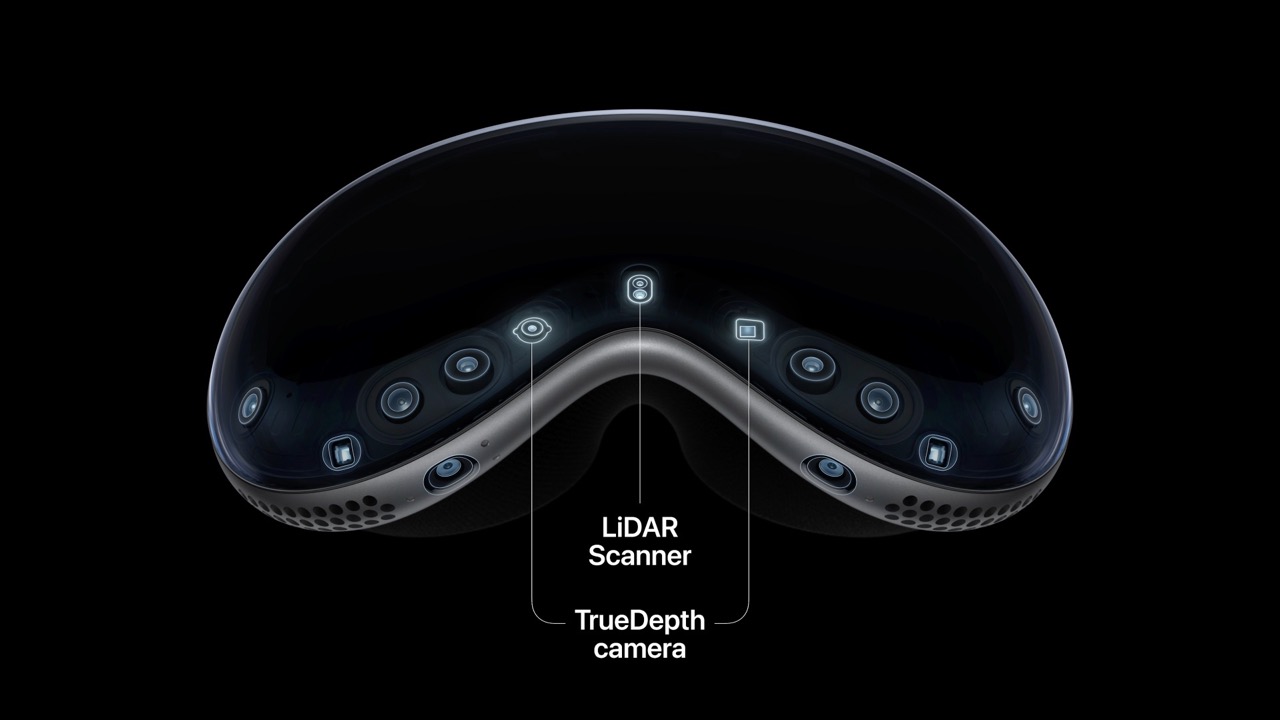

Apple's device of the future currently features:

- 12 cameras, five sensors and six microphones

- 2 cameras, a lidar scanner and a TrueDepth camera track the outside world

- 2 cameras pointed downward to track your hands

- 2 IR cameras and a ring of LEDs to track your eyes

- 23M pixels across micro-OLED displays that fit 64 pixels in the space of an iPhone pixel

- 2 speakers next to your ears with raytracing for spatial audio

- An M2 chip and a new R1 chip designed for real-time processing of the outside world

- 5,000 patents filed over the past few years

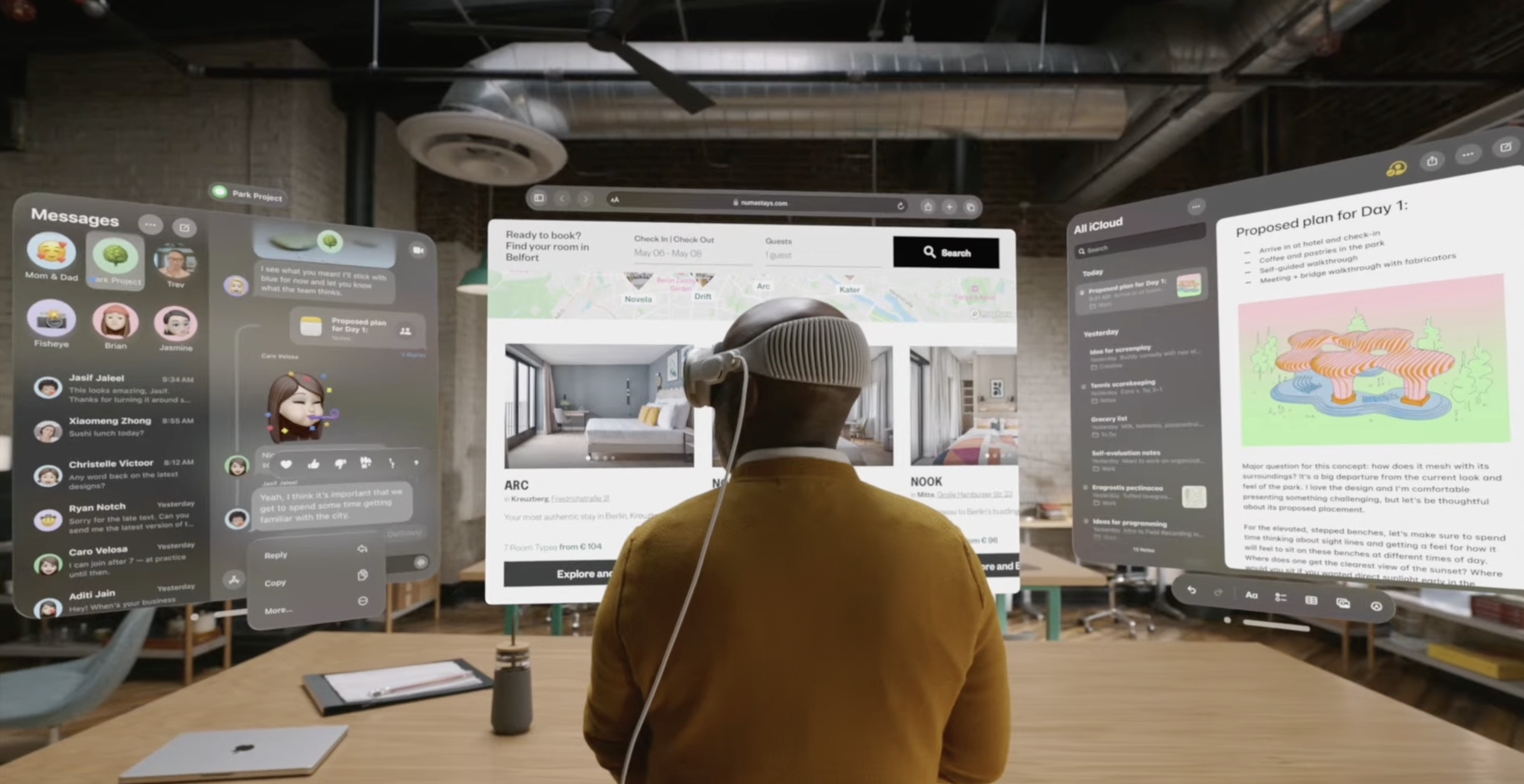

So what does all that technology add up to? Apple's Spatial Computing walkthrough leaned into examples that made the most of the immersive display and let people apply new interaction paradigms (made possible through eye and hand tracking) to common use cases like viewing photos and videos, extending displays, and video conferencing.

This lean into the familiar was explicit in Apple's marketing as well:

"Apple Vision Pro brings a new dimension to powerful, personal computing by changing the way users interact with their favorite apps, capture and relive memories, enjoy stunning TV shows and movies, and connect with others in FaceTime."

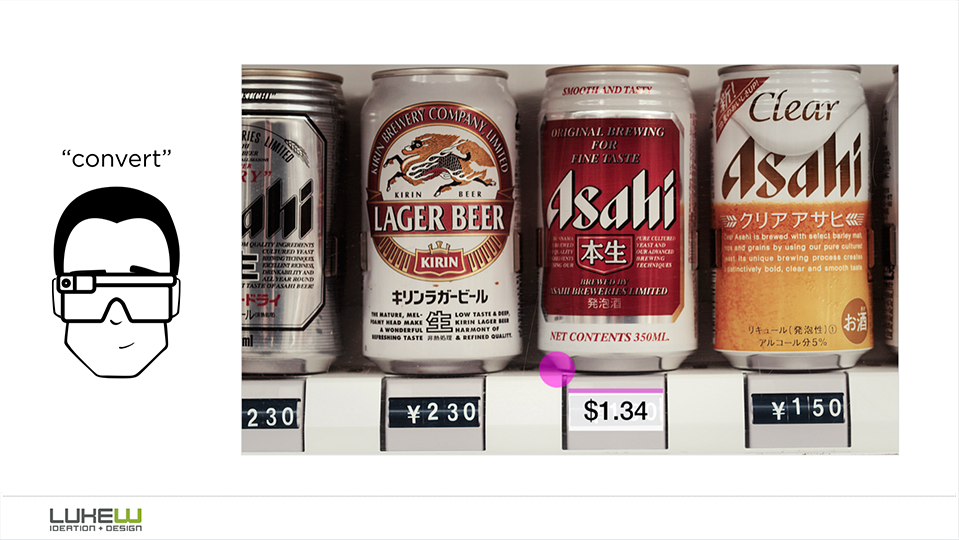

While the hardware certainly appears capable of more than just placing app screens in your real-world environment, very few examples (if any) showed interactions with real-world objects. Personally, I've been eager to see these kinds of possibilities brought to life:

Why were these kinds of use cases missing in Apple's narrative about the Vision Pro?

- They could be non-use cases. They seem compelling, but when you actually design for and with the Vision Pro, things like media viewing could be much more relevant.

- They could still be difficult. There are lots of objects around you, selecting them is hard, and acting on them requires services that haven't been built yet.

- They could be too unfamiliar. At the introduction of an all-new device and computing environment, leaning into known use cases is a bridge for everyone.

But with visionOS and the Vision Pro device making their way into developers' hands next year, we'll likely see what's actually compelling soon enough. As Sam Pullara put it:

"VisionPro looks like the device to figure out if making it smaller, lighter matters. It has all the possible hardware to make great experiences if they exist."

I remain naively excited to find out...